
Continuous Monitoring of CloudWatch Dashboards by Integrating with Slack

Sagar Mallya, Cloud Engineer, STG

Introduction

Set up continuous monitoring of CloudWatch dashboards for a critical application by sharing important metric widgets to Slack at regular intervals and whenever a new version of the application is deployed.

What are CloudWatch Dashboards?

CloudWatch dashboards provide a customizable page for viewing important metrics across different AWS services and Regions in a single view. This gives a bird's-eye view of health metrics for the stacks that make up an application.

Pre-requisites

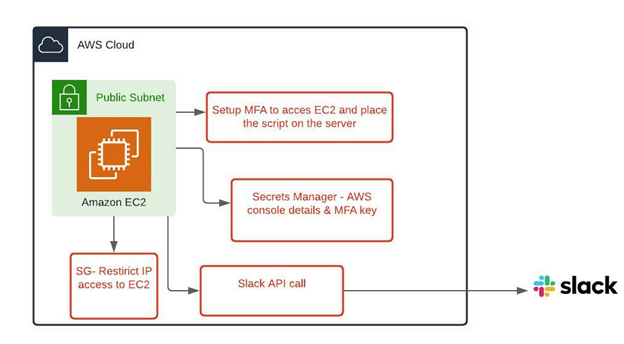

- An AWS console user for monitoring: Create an IAM user with an attached policy that allows read-only access to CloudWatch metrics. Enable MFA for the user and store the keys in Secrets Manager.

- EC2 instance: Set up an EC2 instance to securely run the Python+Selenium script. The following actions need to be performed on the EC2 instance:

  - Attach a role with a policy allowing access to Secrets Manager.

  - Set up Google Authenticator for MFA on the EC2 instance, and a key pair for authentication.

  - Restrict access to the EC2 instance by allowing only specific IP addresses in its security group.

- Install the following packages on the EC2 instance:

  - Boto3: the AWS SDK for Python, used to access AWS services.

  - pyotp: generates the time-based one-time passwords needed for multi-factor authentication of the console user.

  - Chrome WebDriver/Selenium package: the Chrome web browser is used to open the AWS console, log in, and capture the dashboard screenshot.

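- Create a bot on the Slack admin console and give it access to the channels where the widget images will be shared. Because the bot uploads files, it needs permission to write files to those channels, not only read access. The bot will have an authentication token associated with it.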

- Create a Secrets Manager secret to securely store the following data:

  - The access/secret key generated for MFA while creating the console user.

  - The AWS console username and password.
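As an illustration of how the script could read these values back, here is a minimal sketch using the Secrets Manager `get_secret_value` call from Boto3. The secret name `monitoring/console-user` and the JSON key names `username`, `password`, and `mfa_secret` are assumptions made for this example; use whatever names the secret was actually created with.

```python
import json

# Hypothetical secret name, used only for illustration.
SECRET_ID = "monitoring/console-user"


def load_console_credentials(secret_id=SECRET_ID, client=None):
    """Return (username, password, mfa_secret) stored as a JSON secret."""
    if client is None:
        # Imported lazily so the function can be exercised with a stub client.
        import boto3
        client = boto3.client("secretsmanager")
    response = client.get_secret_value(SecretId=secret_id)
    # Assumes the secret was stored as a JSON string with these key names.
    secret = json.loads(response["SecretString"])
    return secret["username"], secret["password"], secret["mfa_secret"]
```

On the EC2 instance, the attached role supplies the credentials for the Boto3 client, so no access keys need to be placed on disk.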

Design the Python-Selenium script

We need a script that securely signs in to the AWS console, navigates to the dashboard, captures screenshots, and shares them on the Slack channel. A high-level design of the script looks like the following:

    import time

    import boto3
    import pyotp
    from selenium import webdriver
    from selenium.webdriver.common.by import By


    def handler(event, context):
        browser = webdriver.Chrome()
        browser.set_window_size(1980, 960)
        browser.maximize_window()
        browser.get('https://test1.signin.aws.amazon.com/console')

        # Placeholders: these values are retrieved from Secrets Manager
        username = ...
        password = ...
        base32secret = ...  # MFA secret key
        browser.save_screenshot("image1.png")

        # Send the username
        a = browser.find_element(By.XPATH, "//*[@id='username']")
        a.send_keys(username)

        # Send the password
        b = browser.find_element(By.XPATH, "//*[@id='password']")
        b.send_keys(password)

        # Generate the current one-time MFA code
        # (submitting the sign-in form and entering the code are not shown in this outline)
        totp = pyotp.TOTP(base32secret)
        print('OTP code:', totp.now())

        # Open the dashboard and wait for the widgets to render
        browser.get('https://ap-south-1.console.aws.amazon.com/cloudwatch/home?region=ap-south-1#dashboards:name=Test')
        time.sleep(30)
        browser.save_screenshot("image3.png")
        # l = browser.find_element(By.XPATH, "//*[text()='CPUUtilization 1']")
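The script relies on `pyotp.TOTP(base32secret).now()` to produce the MFA code. To illustrate what that call computes, here is a standard-library sketch of the TOTP algorithm from RFC 6238, assuming the usual defaults of HMAC-SHA1, 30-second time steps, and 6 digits. The function name `totp` and its parameters are illustrative only; in the script itself, pyotp does this work.

```python
import base64
import hashlib
import hmac
import struct
import time


def totp(base32secret, for_time=None, digits=6, interval=30):
    """Compute a time-based one-time password (RFC 6238, HMAC-SHA1)."""
    key = base64.b32decode(base32secret, casefold=True)
    now = time.time() if for_time is None else for_time
    # The moving factor is the number of whole time steps since the Unix epoch.
    counter = int(now // interval)
    digest = hmac.new(key, struct.pack(">Q", counter), hashlib.sha1).digest()
    # Dynamic truncation: the low 4 bits of the last byte select a 4-byte window.
    offset = digest[-1] & 0x0F
    code = struct.unpack(">I", digest[offset:offset + 4])[0] & 0x7FFFFFFF
    return str(code % 10 ** digits).zfill(digits)
```

Because the code depends on the current time, the clock on the EC2 instance needs to be reasonably accurate for the generated value to be accepted.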

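The captured screenshots are then shared on Slack through the `files.upload` API. The call has the following shape, shown here with sample file, comment, channel, and token values:

    curl -F file=@dramacat.gif -F "initial_comment=Shakes the cat" -F channels=C024BE91L,D032AC32T -H "Authorization: Bearer xoxa-xxxxxxxxx-xxxx" https://slack.com/api/files.upload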
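The same upload can be made from Python so that it runs inside the script. The sketch below posts a multipart form to the `files.upload` endpoint used in the curl command, relying only on the standard library. The helper names `build_multipart` and `upload_screenshot` are hypothetical, and the token and channel values must come from your own Slack bot. It is worth checking Slack's current API documentation for the status of this endpoint before depending on it.

```python
import json
import os
import urllib.request
import uuid


def build_multipart(fields, file_field, filename, file_bytes):
    """Encode text fields plus one file as a multipart/form-data body."""
    boundary = uuid.uuid4().hex
    parts = []
    for name, value in fields.items():
        parts.append((
            f'--{boundary}\r\n'
            f'Content-Disposition: form-data; name="{name}"\r\n\r\n'
            f'{value}\r\n'
        ).encode())
    file_header = (
        f'--{boundary}\r\n'
        f'Content-Disposition: form-data; name="{file_field}"; '
        f'filename="{filename}"\r\n'
        f'Content-Type: application/octet-stream\r\n\r\n'
    ).encode()
    parts.append(file_header + file_bytes + b'\r\n')
    parts.append(f'--{boundary}--\r\n'.encode())
    return b''.join(parts), f'multipart/form-data; boundary={boundary}'


def upload_screenshot(token, channels, path, comment=""):
    """Upload one image file to Slack and return the parsed JSON response."""
    with open(path, "rb") as f:
        body, content_type = build_multipart(
            {"channels": channels, "initial_comment": comment},
            "file", os.path.basename(path), f.read())
    request = urllib.request.Request(
        "https://slack.com/api/files.upload",
        data=body,
        headers={"Authorization": f"Bearer {token}",
                 "Content-Type": content_type},
    )
    with urllib.request.urlopen(request) as response:
        return json.load(response)
```

A call such as `upload_screenshot(token, "C024BE91L", "image3.png", "Dashboard snapshot")` would send the screenshot captured by the script; the returned dictionary contains an `ok` field indicating whether Slack accepted the request.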

Run the script at a regular interval on EC2

- Run on a pre-defined schedule: Set up a cron job on the EC2 instance to run the script every 3 hours (for example, with the cron schedule `0 */3 * * *`), so that the latest data from the dashboards is captured and shared on the Slack channel.

- Share the metrics on new deployment: Create an EventBridge rule that triggers on a successful deployment by the code pipeline. The rule invokes a Lambda function, which connects to the EC2 instance through Session Manager and uses a document to run the command that shares the images on the Slack channel.
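The deployment trigger can be sketched as follows. This is one possible arrangement, not necessarily the exact setup described above: it assumes the rule matches CodePipeline execution state-change events, and that the Lambda function runs the script through the Systems Manager `send_command` call with the `AWS-RunShellScript` document. The instance ID and script path are hypothetical placeholders.

```python
# Event pattern for the EventBridge rule: successful pipeline executions only.
EVENT_PATTERN = {
    "source": ["aws.codepipeline"],
    "detail-type": ["CodePipeline Pipeline Execution State Change"],
    "detail": {"state": ["SUCCEEDED"]},
}

# Hypothetical values, used only for illustration.
INSTANCE_ID = "i-0123456789abcdef0"
COMMAND = "python3 /opt/monitoring/dashboard_to_slack.py"


def handler(event, context, ssm=None):
    """Lambda entry point: run the screenshot script on the EC2 instance."""
    # Guard against events that are not successful pipeline executions.
    if event.get("detail", {}).get("state") != "SUCCEEDED":
        return None
    if ssm is None:
        # Imported lazily so the handler can be exercised with a stub client.
        import boto3
        ssm = boto3.client("ssm")
    response = ssm.send_command(
        InstanceIds=[INSTANCE_ID],
        DocumentName="AWS-RunShellScript",
        Parameters={"commands": [COMMAND]},
    )
    return response["Command"]["CommandId"]
```

For this to work, the Lambda execution role needs permission to call `ssm:SendCommand`, and the EC2 instance must be registered as a managed instance in Systems Manager.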